Software & DevOps

LAMP Stack and Linux System Administration

The majority of my programming experience is in PHP, which I've been using since the late '90s. It was in version 3 at that time, and had just become capable of object-oriented programming: it supported classes, rather than being restricted to functions only. I have always paired it with Linux, Apache, and MySQL.

Running a LAMP stack environment obviously requires learning how to use and administrate a Linux machine, but I don't just run Apache and MySQL. I also run DNS (bind), mail (Sendmail and Exim), file (NFS and Samba), and other system services such as firewalls (iptables). My desktop environment is usually a mix of Windows and Linux, with files hosted on Linux and shared with Samba. Thus, my Linux sysadmin skills have been in development since the late '90s. That includes administrating it for employers, as well as for my own companies. I have used original RedHat, Red Hat Enterprise Linux (RHEL) 7, CentOS, Debian, and Ubuntu.

The debate over which of these OS families is "best" usually boils down to this: Which one is already used in your infrastructure? I'm comfortable using whatever you already have. If you're getting ready to launch a new operation, and you need help selecting the OS to base your IT infrastructure on, I'll be happy to advise you, whether you're considering Linux or any other OS.

This Portfolio

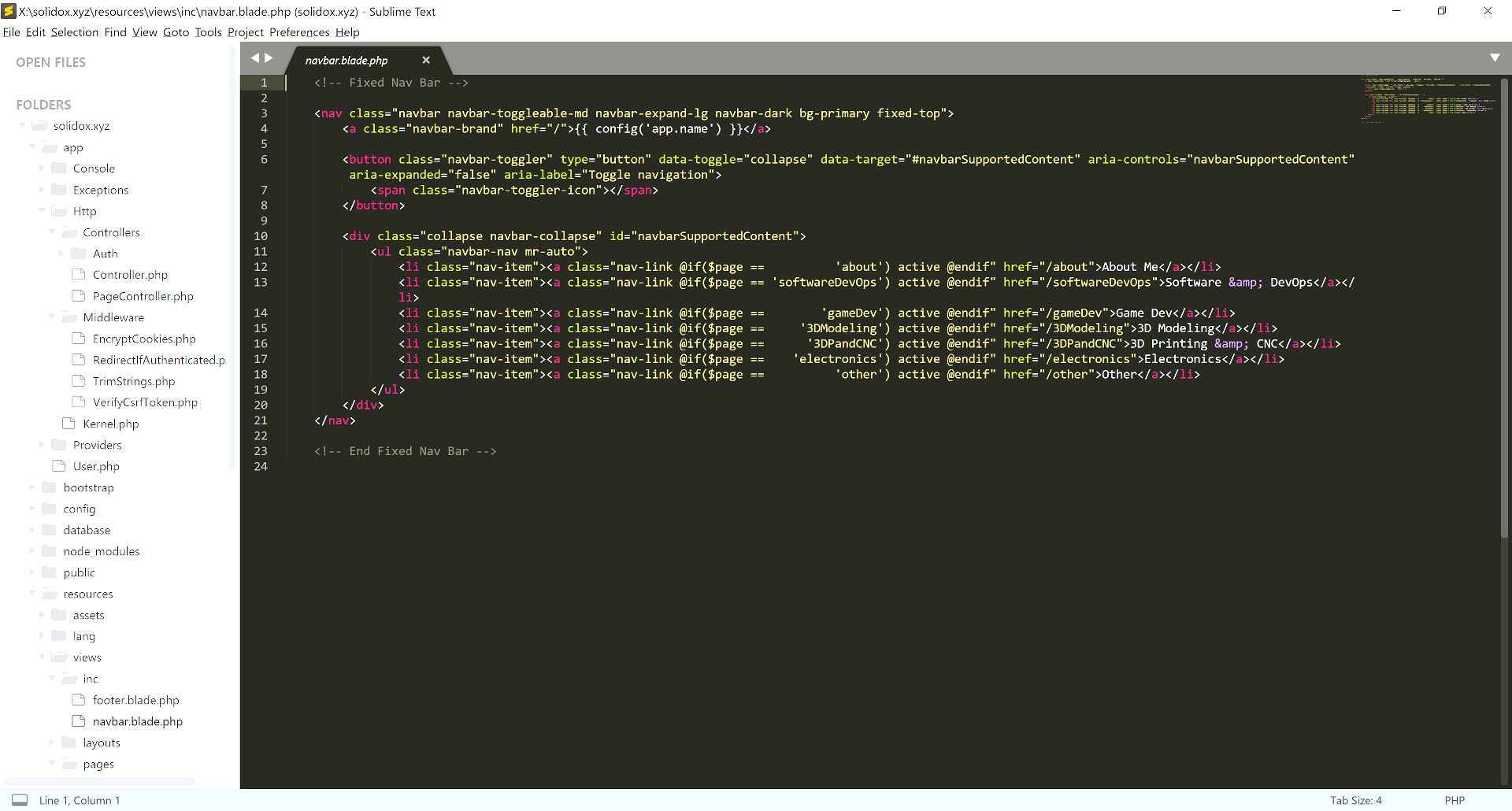

This portfolio site is served from Laravel 5 on PHP 7. There is no CMS. Every page is coded by hand, using Laravel's Blade templating system. The code and all content were written in a few days in Sublime Text, a great cross-platform IDE. The site uses a slightly customized version of Bootstrap, which makes it very easy to get a site up and running without having to do a lot of custom front-end work. I've written web app frameworks with their own custom CSS in the past, but this represents a significant duplication of effort. In my opinion, it's usually more efficient to spend time puzzling over problems that haven't already been solved.

Laravel 5 ships with version 3 of Bootstrap. I decided to upgrade to version 4. Overhauling the site code to use the new framework properly took a few hours (mostly research), and was not a big deal. I'm using Bootstrap's CDN to source the JavaScript and CSS files because the versions fetched by npm (even after configuring package.json and webpack.mix.js) are broken in a way that disables the navbar menu on mobile devices. (I assume this is a side effect of the code still being in beta.)

The server is running on Amazon EC2. All source code is tracked in a git repo. Configuration management and deployment of the site are both done through Chef.

Internal Tools

I developed internal tools (websites accessible only via corporate intranet) at several of the companies I worked for. For obvious reasons, I can't share any screenshots.

- Customer lookup tool. This tool was used to look up customer accounts, and had the ability to display more account information for reps who were authorized to see it. Access logs were kept to ensure that elevated access was not abused.

- Dialup gear monitoring. This tool queried a number of Nortel CVX1800s over SNMP. These devices terminated one or two DS3 circuits, and could handle many hundreds of concurrent connections. Statistics about active circuits were collected at intervals by a PHP script running from a cron job. Results were stored in a MySQL database. The app generated over a million inserts per month, and that's when I really began to learn how to use MySQL properly. I added indexes to the tables, and that sped up query performance by several orders of magnitude. The tool's website allowed looking up the statistics, and generated 3D graphs of usage over time, including bar graphs and HLCO charts.

- Purchasing tool. This tool was commissioned by the purchasing department. It allowed orders to be created, updated, and processed online. It allowed addition and removal of line items and tracked all changes to orders, so that any changes could be audited as needed.

- Web log analysis tool. The company had a 3rd-party web log analyzer that normally ran as a web app. Internal clients desired to receive automatically-generated reports from the analyzer through email, so they wouldn't have to manually query the app. I figured out how to fool the app into thinking that it was running in a Web server context, rather than from a shell process, using environment variables. I then wrote a parser that converted its output into arrays, and used those arrays to generate the necessary emails.

- Central management console for Internet forums. The company operated dozens of Internet forum sites. Each site ran by itself, without central management. In order to allow for central control, I created a front-end management site, an encrypted RPC stack, and a plugin that ran on the forums. It became instantly possible to change passwords, gather usage statistics, audit plugins, collect signup information, and do other things in a way that would previously have taken days. The communications channel was encrypted with keys that were thousands of bits long.
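
The effect of indexing described for the dialup gear monitoring tool can be illustrated with a small sketch. It uses Python's built-in SQLite module as a stand-in for MySQL, and the table, column, and index names are hypothetical. It only shows how the query plan changes from a full scan to an index search; it does not reproduce the original schema or its timings.

```python
import sqlite3


def query_plan(conn, sql, params=()):
    """Return SQLite's plan description for a query as one string."""
    rows = conn.execute("EXPLAIN QUERY PLAN " + sql, params).fetchall()
    # The human-readable detail is the last column of each plan row.
    return " | ".join(row[-1] for row in rows)


conn = sqlite3.connect(":memory:")
conn.execute(
    "CREATE TABLE circuit_stats ("
    " device TEXT, sampled_at INTEGER, active_calls INTEGER)"
)
# Synthetic samples standing in for the SNMP statistics.
conn.executemany(
    "INSERT INTO circuit_stats VALUES (?, ?, ?)",
    [(f"cvx-{i % 8}", i, i % 500) for i in range(10_000)],
)

lookup = "SELECT * FROM circuit_stats WHERE device = ? AND sampled_at >= ?"
args = ("cvx-3", 9_000)

# Without an index, every row has to be examined.
plan_before = query_plan(conn, lookup, args)

# A composite index on the columns used in the WHERE clause.
conn.execute("CREATE INDEX idx_device_time ON circuit_stats (device, sampled_at)")
plan_after = query_plan(conn, lookup, args)

print(plan_before)
print(plan_after)
```

In MySQL the equivalent check would be an `EXPLAIN` on the slow query before and after adding the index.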

Game Server Instance Management and Remote Procedure Calls

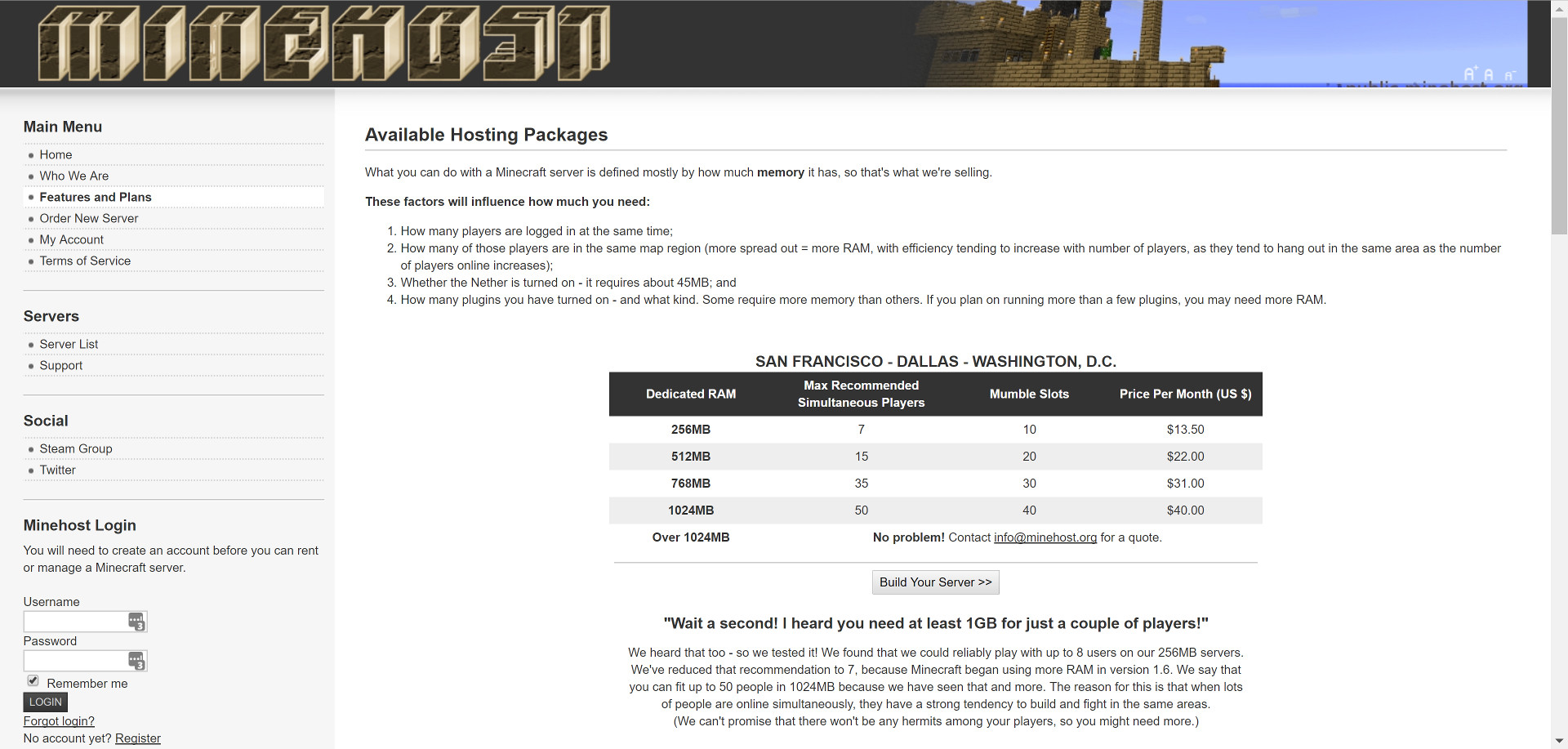

When I ran a Minecraft gaming service provider, it was necessary to create much of the infrastructure from scratch myself, including instance configuration management and remote control. Configuration management systems such as Chef, Puppet, and Ansible are designed for system administrators who need to control entire machines. My application required that, but to a much finer granularity. In addition to handling configuration for the "outer" game server (the physical machine that contained many instances), it had to allow end users to directly control the configuration and operation of their instances through a control panel. I achieved this with PHP, an encrypted REST RPC stack, a series of shell scripts, and a cron job.

Running a GSP has some things in common with running a Web hosting company, but there are some significant differences. With web hosting, you have two main pieces (HTTP and database service) plus some ancillary services (DNS, load balancers, etc). There will also be a control panel of some kind. Most of the Web servers running on a machine are low-traffic, and thus idle almost all the time; so it's reasonable to have hundreds of sites on a single server.

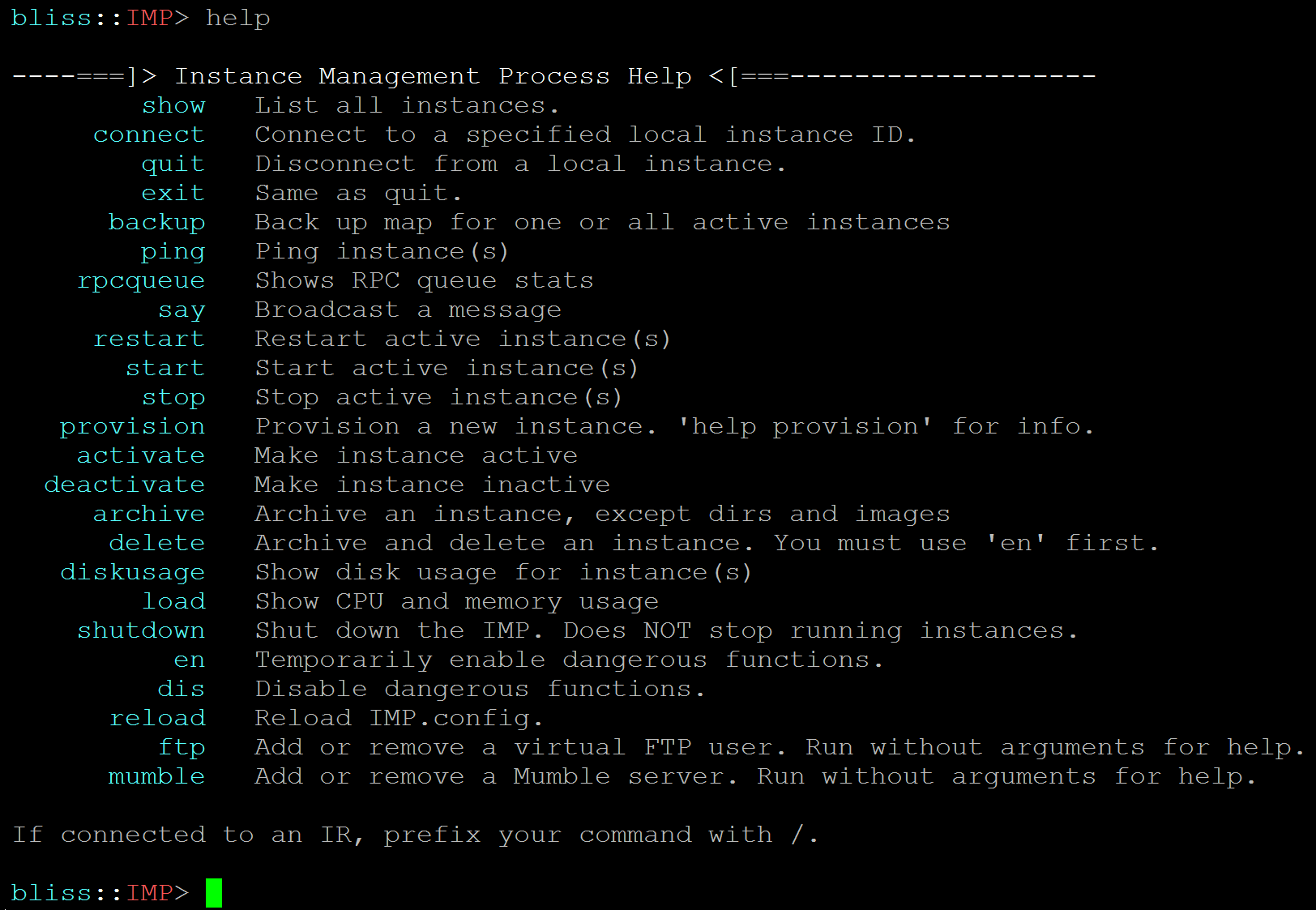

With a game service provider, architectural requirements are much more demanding. Each customer is renting resources (primarily RAM) on a physical machine. In the case of Minecraft, the application is being served from Java, and each server gets its own dedicated RAM. (One Minecraft server cannot cede unused RAM to another, so it's not like running Apache or nginx.) The game constantly simulates a small amount of land, even if no one's logged in, so there is always some load. Each game server has to be controllable via a control panel, and that requires a secure RPC stack. There must also be support for backups and rollbacks, and the control panel has to be able to send commands to the server to carry out those and other operations. Game servers have to be controlled by a watchdog process (an Instance Runner) that will restart them if they hang, and each server's watchdog process must itself be launched and periodically monitored by an Instance Management Process (IMP) running on each machine. Additionally, users have to be able to change the password to their SFTP server. In our case, they also had the ability to set up a Mumble server for voice chat.

Front End

- Web server environment: LAMP stack on virtual machines (1 Apache, 1 MySQL)

- CMS: Joomla!

- Template system: Smarty

The main web server had a registration page, and a user control panel. The control panel allowed logged-in users to adjust the configurations of their game servers, as well as rolling back their maps to previous snapshots. RPC payloads were created as PHP objects and then serialized (turned into a flat text format) for transmission. The serialized payloads were then encrypted using GNU Privacy Guard (GPG), the open-source alternative to PGP. Each server in the system had its own key. When a payload was received, it was decrypted according to its originator's public key, and then responded to with another payload which was encrypted in the same manner.
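
The request/response flow above can be sketched roughly as follows. This is not the original PHP code. JSON stands in for PHP serialization, and an HMAC tag stands in for GPG: it authenticates the sender with a shared key but does not encrypt anything, so it shows only the shape of the exchange. The function names, the action table, and the key are all hypothetical.

```python
import hashlib
import hmac
import json


def seal(payload: dict, key: bytes) -> bytes:
    """Serialize a payload to JSON and prepend an HMAC-SHA256 tag."""
    body = json.dumps(payload, sort_keys=True).encode("utf-8")
    tag = hmac.new(key, body, hashlib.sha256).hexdigest().encode("ascii")
    return tag + b"\n" + body


def unseal(message: bytes, key: bytes) -> dict:
    """Verify the tag, then deserialize; reject anything that fails the check."""
    tag, _, body = message.partition(b"\n")
    expected = hmac.new(key, body, hashlib.sha256).hexdigest().encode("ascii")
    if not hmac.compare_digest(tag, expected):
        raise ValueError("payload failed authentication")
    return json.loads(body)


def handle(message: bytes, key: bytes, actions: dict) -> bytes:
    """Application-server side: open the request, run it, seal the response."""
    request = unseal(message, key)
    result = actions[request["action"]](**request["params"])
    return seal({"ok": True, "result": result}, key)


# Hypothetical action table and key, for illustration only.
actions = {"get_config": lambda instance: {"instance": instance, "max_players": 20}}
key = b"per-server secret (hypothetical)"

request = seal({"action": "get_config", "params": {"instance": "mc-01"}}, key)
response = unseal(handle(request, key, actions), key)
print(response)
```

A payload that was altered in transit fails the tag check in `unseal` and is never dispatched to an action.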

The website presented a significant attack surface, so I signed up to receive security updates from a Joomla! mailing list. I applied any security patches as soon as I became aware of them. Input from web forms was also carefully sanitized. If a field was supposed to return an integer or a float, the code made sure that the input was strictly numeric, and cast to the correct type. If a field contained text, that text was checked for any suspicious characters, and escaped to avoid any SQL injection attacks.
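
A minimal sketch of the two checks described above, in Python rather than the original PHP. The numeric check mirrors the "strictly numeric, then cast" rule. For text, the sketch swaps in parameterized queries instead of manual escaping, which is a different technique with the same goal. SQLite stands in for MySQL, and the table and field names are hypothetical.

```python
import sqlite3


def parse_int_field(raw: str, minimum: int, maximum: int) -> int:
    """Accept only a plain integer within the given range, then cast it."""
    text = raw.strip()
    # Allow an optional leading minus sign followed by ASCII digits only.
    digits = text[1:] if text.startswith("-") else text
    if not (digits.isascii() and digits.isdigit()):
        raise ValueError(f"not an integer: {raw!r}")
    value = int(text)
    if not minimum <= value <= maximum:
        raise ValueError(f"out of range: {value}")
    return value


conn = sqlite3.connect(":memory:")
conn.execute("CREATE TABLE servers (name TEXT, max_players INTEGER)")

# Hostile-looking text is passed as a bound parameter, never spliced into SQL.
hostile_name = "x'); DROP TABLE servers; --"
conn.execute(
    "INSERT INTO servers VALUES (?, ?)",
    (hostile_name, parse_int_field(" 20 ", 1, 100)),
)
stored = conn.execute("SELECT name, max_players FROM servers").fetchone()
print(stored)
```

Because the driver binds the values, the hostile string is stored as ordinary text and the table survives.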

Application Servers

- Server environment: Dedicated

- Provider: SoftLayer (now IBM Bluemix)

- Data centers: 3 (San Jose, CA; Dallas, TX; Washington, DC)

- Machines: Quad-core Intel, dual SSDs in RAID 1 configuration for redundancy

- Bandwidth: Peering with multiple providers in every data center

- OS: Debian Linux

- Stable release only (packages typically make it into Stable after 1+ year in testing branch, with the exception of security patches)

- All servers ran same version/configs as dev box

- All machines updated at the same time, after first verifying updates on dev box

- Instance management: PHP scripts

- Individual application servers managed by Instance Runners (IRs), as many as a few dozen per machine

- All Instance Runners managed by the Instance Management Process (IMP), one per machine

- IMP launched at OS startup by an init script

- IMP responsible for starting active IRs, pinging them periodically, and killing/restarting any that don't respond; as well as provisioning/deleting instances, configuring Mumble for voice chat, etc. (see screenshot)

- IMP communicates with individual IRs through local IP sockets, guarded by identd

- IRs launch Java processes within chroot jails, and talk to them over standard IO

- Application service: Java

- Network security: iptables

- Application security: Java apps were contained within chroot jails, and local interprocess communication was guarded with identd

Each game server had a master Instance Management Process that ensured that all the server instances that were supposed to be up and running, were. Each server instance had its own watchdog process, called an Instance Runner, that would launch the server in a container (a chroot jail), so as to keep each instance isolated from each other instance, as well as the control software. People could upload custom plugins, and there was nothing to stop a plugin from walking the file system, so it was necessary to make sure they had no access to see anything more than absolutely necessary. The Instance Runner process was also responsible for re-launching its game server if it crashed, which could happen from time to time.
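
The relaunch-on-crash part of an Instance Runner can be sketched as below. This is a simplified illustration in Python, not the original PHP: it leaves out the chroot jail, the standard-IO channel to the Java process, and hang detection, and it caps the number of restarts so the example terminates. The function name and parameters are hypothetical.

```python
import subprocess
import sys
import time


def run_with_restarts(command, max_restarts, delay_seconds=0.0):
    """Run a command, relaunching it after each non-zero exit.

    Returns the list of exit codes, one per launch.
    """
    exit_codes = []
    for attempt in range(max_restarts + 1):
        if attempt > 0:
            # Brief pause so a crash loop does not spin the CPU.
            time.sleep(delay_seconds)
        process = subprocess.Popen(command)
        exit_codes.append(process.wait())
        if exit_codes[-1] == 0:
            break  # clean shutdown, nothing to restart
    return exit_codes


# A child that always "crashes" with exit status 3, standing in for a game server.
crashing_child = [sys.executable, "-c", "import sys; sys.exit(3)"]
codes = run_with_restarts(crashing_child, max_restarts=2)
print(codes)
```

A production watchdog would typically run without a restart cap and would also kill and relaunch a child that stops responding, as the IMP did for the Instance Runners themselves.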

The instance runners allowed the main control process (IMP) to connect to them over a socket. To prevent rogue plugins from discovering this and exploiting it, each process communicating over those sockets used identd, a Linux service that reports the user ID that owns the connecting process. Since the control process and each watchdog had their own user IDs, any connection that wasn't coming from the right user would be dropped, and the violation immediately reported.
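
As an illustration of the check described above, the sketch below parses a reply in the format defined by the Identification Protocol (RFC 1413) and compares the reported user to the one that is expected. It is a simplified parser, written in Python rather than the original PHP. Querying the identd daemon itself is omitted, and the user name `imp` is hypothetical.

```python
def parse_ident_reply(reply: str):
    """Return the user ID from a USERID reply, or None for errors or junk.

    Expected shape: "<server-port> , <client-port> : USERID : <opsys> : <user-id>"
    """
    parts = [part.strip() for part in reply.strip().split(":", 3)]
    if len(parts) == 4 and parts[1].upper() == "USERID":
        return parts[3]
    return None


def connection_allowed(reply: str, expected_user: str) -> bool:
    """Accept the connection only if identd names the expected owner."""
    return parse_ident_reply(reply) == expected_user


print(connection_allowed("6193, 23 : USERID : UNIX : imp", "imp"))
print(connection_allowed("6193, 23 : USERID : UNIX : plugin", "imp"))
print(connection_allowed("6195, 23 : ERROR : NO-USER", "imp"))
```

Anything other than a well-formed reply naming the expected user is treated as a failed check, so an error reply results in a dropped connection.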

Configuration Management

CM was critical to this operation for two reasons. One, multiple application servers had to all be running the same version of the same operating system, with the same applications and system settings (such as firewall rules). Two, customers had to be able to edit their application server settings from our control panel.

- Repository: Subversion (SVN)

- init scripts, firewall rules, skel (default files populated into new home directories), and other files that live in /etc

- Libraries containing business logic

- Instance Management Process, Instance Runner, Java, etc.

- Mutation: Migration scripts

- Written in bash

- When a significant architectural change was rolled out, it was tested in staging, and then applied to each server automatically over rsync and ssh

- Scripts were designed for idempotency, similar to Chef recipes: they could be run once or ten times, and the result was always the same

- Deployment: Files synchronized from SVN or with rsync; remote commands executed automatically on one, multiple, or all hosts over SSH (similar to Chef, Puppet, Ansible, etc)

- Inter-process communication (IPC)

- Between processes on application servers: Local IP sockets, guarded by identd

- Between Web and application servers: RPC stack based on serialized PHP request/response objects delivered over an encrypted channel

- Front-end web server connects to Apache on application server and delivers encrypted payload over REST

- Application server decrypts payload, processes request, assembles a response object, encrypts it, and sends it back to the front-end server

- Examples: Provision new server, retrieve or store a specific instance's configuration files, gather statistics, configure Mumble or FTP, etc.
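
The idempotency property mentioned for the migration scripts can be shown with a small sketch. The originals were written in bash; this Python version is only an illustration, and the helper name, file name, and firewall rule are hypothetical. The step checks the current state before changing anything, so repeated runs leave the same result.

```python
import tempfile
from pathlib import Path


def ensure_line(path: Path, line: str) -> bool:
    """Append a line to a file unless it is already there.

    Returns True if the file was changed, False if it was already correct.
    """
    existing = path.read_text().splitlines() if path.exists() else []
    if line in existing:
        return False
    existing.append(line)
    path.write_text("\n".join(existing) + "\n")
    return True


with tempfile.TemporaryDirectory() as workdir:
    rules = Path(workdir) / "firewall.rules"
    rule = "-A INPUT -p tcp --dport 25565 -j ACCEPT"
    # Run the same step three times; only the first run modifies the file.
    changed = [ensure_line(rules, rule) for _ in range(3)]
    final_text = rules.read_text()

print(changed)
```

Returning whether anything changed also lets a calling script decide if a dependent service needs to be reloaded.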

Botnet Defense

My biggest customer's game server was attacked by a botnet. Hundreds of different accounts logged in at random from hundreds of IPs. If you blocked an account, many more were ready to take its place; and if you blocked an IP, you had the same problem. The weakness of the attack was that the accounts and IPs were used interchangeably. If a botnet user connected from an IP, it was possible to look up all other users who connected from that IP, and then block them, and all the other IPs that they connected from, and all the other users who connected from those IPs, and so on. Six levels of this were all that were required to find all botnet users and IPs. I wrote a PHP script that took only one user or IP as an argument, and then walked the logs to find all associated users and IPs. Once the script was complete, the botnet attack was completely isolated in less than a minute. None of the game servers were ever botnetted after that. If they had been, the attack would've been very short-lived.
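
The log walk described above is a breadth-first search over a graph whose nodes are users and IPs. The sketch below is a Python reconstruction of that idea, not the original PHP script. The log format (pairs of user and IP), the function name, and the sample data are assumptions, and `max_depth` counts hops between users and IPs, which may not match how the original counted its six levels.

```python
from collections import defaultdict, deque


def linked_accounts(log_entries, seed, max_depth=6):
    """Find every user and IP reachable from a seed by shared logins.

    log_entries: iterable of (user, ip) pairs.
    seed: ("user", name) or ("ip", address).
    """
    neighbors = defaultdict(set)
    for user, ip in log_entries:
        # Tag each node with its kind so a user name cannot collide with an IP.
        neighbors[("user", user)].add(("ip", ip))
        neighbors[("ip", ip)].add(("user", user))

    seen = {seed}
    queue = deque([(seed, 0)])
    while queue:
        node, depth = queue.popleft()
        if depth == max_depth:
            continue  # do not expand beyond the depth limit
        for other in neighbors[node]:
            if other not in seen:
                seen.add(other)
                queue.append((other, depth + 1))

    users = {name for kind, name in seen if kind == "user"}
    ips = {name for kind, name in seen if kind == "ip"}
    return users, ips


log = [
    ("bot1", "10.0.0.1"),
    ("bot2", "10.0.0.1"),
    ("bot2", "10.0.0.2"),
    ("bot3", "10.0.0.2"),
    ("alice", "192.0.2.7"),
]
users, ips = linked_accounts(log, ("user", "bot1"))
print(sorted(users), sorted(ips))
```

Starting from a single account, the search reaches the other two accounts and both shared IPs, while the unrelated user and address are left alone.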

Would I do anything differently today?

Yes and no. When you're running a service provider, you have to trust hundreds of moving parts. Anything that's more complicated than it needs to be places the business at added risk of decreased customer satisfaction, reduced signups and retention, and increased workload. Every decision that influences this must be made after carefully analyzing the problem from multiple angles.

- Front end and DB: AWS or DreamHost. The load would not be particularly high, as the focus is on the game servers, not the front end. However, it would be nice to set up a load balancer and two VMs for redundancy. DB service would still be InnoDB tables on MySQL. AWS and DreamHost both have this as a service, so I wouldn't need to manage a separate VM for it.

- Backups: Amazon S3 or DreamObjects. I'd still use rdiff-backup, as it's efficient and quick. DreamObjects are S3 compatible, so there is no vendor lock-in.

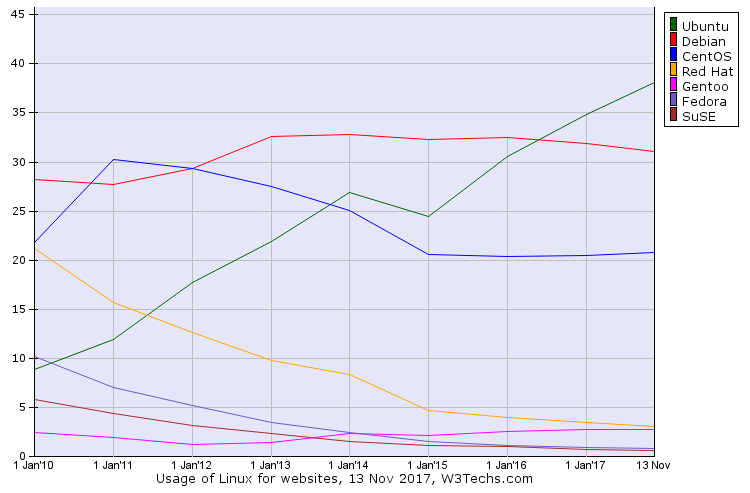

- OS: Ubuntu Server. If I was doing this for an organization that was invested in some other OS, I'd use that instead. However, if I was doing this from scratch, I'd stick with Ubuntu. It's the most popular Linux distro for serving Web sites, having more than quadrupled its market share from 9% to 39% since 2010. This has had a definite impact on community support. Many Docker images and Chef recipes are either Debian/Ubuntu-specific, or will rewrite RHEL/CentOS configurations in the Debian style. The package management system has a fantastic text-mode UI, the packages are new enough to install newer software like Chef 13, and the stable branch is just that. There are no hoops to jump through. It just works.

- Front end: Either Laravel or October CMS, which is based on Laravel. Joomla! is really nice, but it has more functionality than I need, and a large attack surface. I still get security updates from their mailing list. Laravel is more modern, simpler to get into, and easier to understand. It scaffolds authentication for you, meaning that by default you get an auth system that has already been thoroughly tested. October CMS makes it easy to put "code-behind" stuff in the same file as a template (e.g. AJAX calls). You can use a WYSIWYG editor, or write your own HTML. It automates a lot of things you'd do by hand in stock Laravel, so you can get to the business of building a site more quickly. However, there is one caveat: It's different. Files are in different places. Things don't work quite the same. It's a software package unto itself, rather than something you install within Laravel.

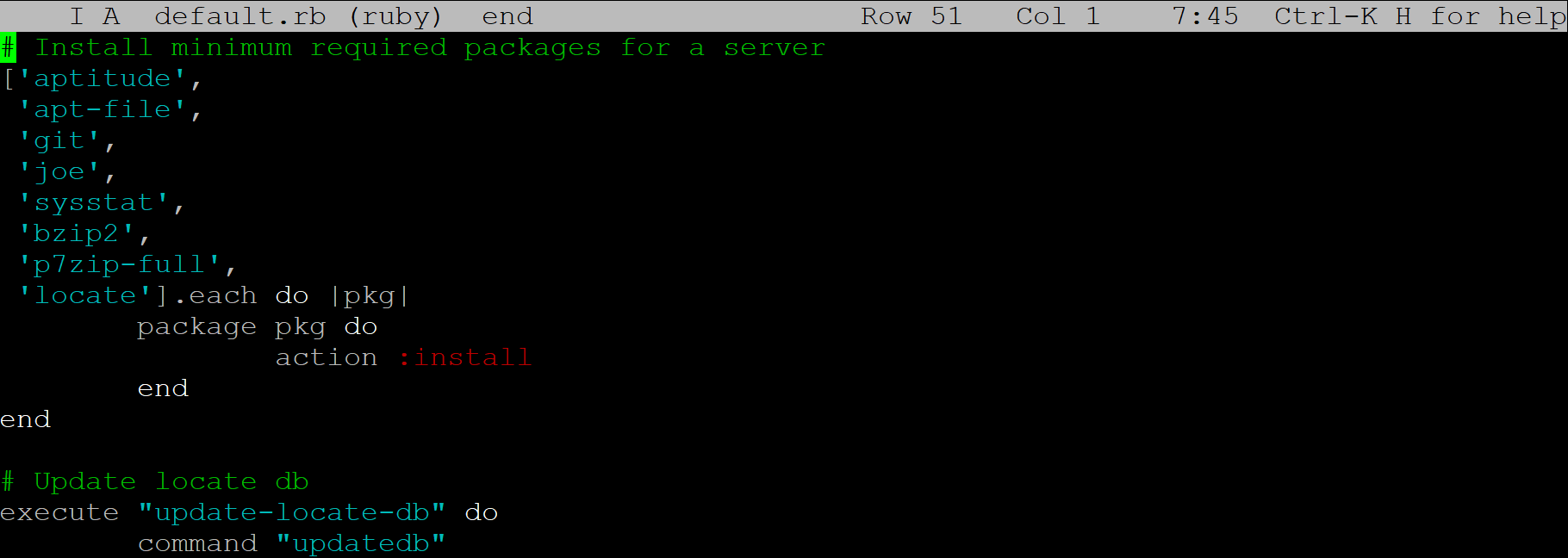

- Infrastructure and configuration management for the machines: Chef or Ansible, and possibly Docker. The in-house rsync/SVN-based scripts I wrote were quite adequate, but it seems everyone is going in the direction of using open-source config management.

- Ansible: I like the "agentless" method, where there is no daemon polling the server, and everything is done over SSH. This seems to me like a very clean way of doing things. Your management server can be behind a NAT firewall with no port forwarding from outside, nice and secure within your private network, and still manage remote servers in the cloud or wherever else.

- Chef: A strong contender, and many companies seem to think it's the best. There are two main drawbacks. Architecturally, Chef servers have to be directly reachable by their clients, which is less than ideal from a network security standpoint. With regards to cost, if you run your Chef server in the cloud, you'll be paying for a lot of CPU usage. Chef is a mashup of many different programs that run all the time, and as a result, Chef servers are constantly under load even when they are doing nothing. You have to pay for that! Nevertheless, it's still a good choice. For networks with many cloud instances, the cost of running a Chef server becomes a relatively small concern. If you're running it on bare metal, it's probably not a concern at all. Chef also enjoys more community support, and is said to scale better.

- Docker: I'll talk more about this below. The "outer" game server management technology (the IMP) would be very easy to Dockerize. The advantage to doing this is not huge, as IMP is already a stable, mature piece of software. Nevertheless, if there was some new feature or security patch, or PHP was upgraded, it would be marginally simpler to deploy in production.

- Configuration management for game server instances: Still bespoke. (This refers to game server configuration files, plugin JARs, etc. — the "outer" server configuration is a different matter.) The control panel is the first place customers visit after they sign up. It needs to make a good first impression, and it needs to keep making that impression every time the customer visits. The longer a control panel takes to load, the jankier it feels. Therefore, it needs to be as snappy and responsive as the game servers themselves. This instills confidence that the service provider isn't going to pack them in like sardines on the cheapest possible hardware. Customers like control panels that load fast, and my encrypted RPC stack met that need very well. It has the following advantages:

- No caching layer. Configuration can be changed by privileged users (customers and their designated moderators) by typing commands into the game's console, or by editing files over FTP. Caching would have required synchronizing these changes in the background, and nearly in real time. It would be another layer of complexity, another "thing to break," a potential source of frustration for customers. It would also require allowing game servers to initiate communications with the front-end Web server, rather than only replying to its occasional queries. This would be a deviation from the security model I designed.

- Minimum dependencies. The RPC stack requires only the PHP interpreter, and the business logic. The only outside maintainer who can break this is the PHP dev team. Outside of major releases every few years, this is unlikely to happen.

- Framework-agnostic. The RPC stack required no integration with Joomla, and would work as well under Laravel with zero internal changes. Because it has no dependencies on any external framework, I never had to install or maintain Joomla on the game server machines just so the RPC stack would run. I also didn't have to worry about upstream changes to Joomla breaking the RPC stack. If I run the stack on top of Laravel 5, I can upgrade to Laravel 6 or even switch frameworks completely, and the RPC stack won't care. Loose coupling is good!

- Bulletproof, extensible, and fast. The RPC stack ran on the game servers with very little "glue logic." The PHP file for that had only a few lines of includes to pull in the business logic, and then another few lines to process the messages and return the responses. It's just about as fast as it can be without rewriting it in a compiled language. The time between clicking the name of a server to edit, and getting a fully populated control panel, was a fraction of a second. This included the entire round-trip time of fetching the configuration from the game server over an encrypted channel.

- Why not Lumen on the game servers? In short, because it would not improve ROI. It isn't faster or more secure than my RPC stack, nor does it do anything useful that the stack doesn't already do. It would add complexity with no accompanying benefit, and be "another thing to break." The existing system has proven itself to be 100% reliable and trouble-free through years of service. There is simply no upside in replacing it.

- Version control: Git. I used SVN because it's what I knew at the time, but Git has obviously outpaced it by far. I've already used Git on open-source projects, and to track my own stuff, so I see no reason not to use it in the future.

- Containers: Probably still chroot, but maybe Docker. Contention over CPU resources was never an issue, even on big server instances with dozens of concurrent players. Memory usage was already constrained by Java, right on the command line. RAM overhead was negligible. The one obvious advantage to using Docker is that it would make it easy to implement immutable infrastructure. With Chef, there is always the possibility of unintentional configuration drift. With Docker, Chef would still be used to bootstrap and configure each machine at the OS level, but the application (the game server management processes and the game server processes themselves) would be running in Docker. They would not be susceptible to configuration drift in the domain I control (game server management) because they would all be running identical Docker images. They would be susceptible to changing configuration requirements of the game server and its many plugins, but that's a problem whether I use Docker or not. It's worth noting that the game server software almost never changed once it was mature, so software upgrades are not as pressing a concern as they would be otherwise.

- Server machines: Probably still bare metal, but I'd do some research. I like knowing exactly what I'm going to get. I'd have to conduct a study to find out whether running a GSP from EC2 or some other cloud provider would result in as much predictability as bare metal. Minecraft constantly eats CPU cycles even when no one's logged in, and Amazon likes to charge for CPU cycles. That seems like something that would require close inspection to see if it's economically feasible at all. With bare metal, I always knew precisely what each machine was going to cost per month, and how much bandwidth it would get. I always knew exactly what hardware (SSDs, RAM, processor cores, etc.) went into what I sold. I always knew that latency, my biggest pain point prior to SoftLayer, would be excellent. (They were not my first provider. The previous two are why I was willing to suffer a narrower profit margin to host there.) To feel good about using a cloud provider instead, I would have to satisfactorily address all of those points.

Software Development Principles

- Software development methodologies are not plans. They are tools. They should be used because they're the best solution to a specific problem, not "just because." For example, design patterns and anti-patterns can be useful to know about, but they are somewhat generic, and a problem should not be shoe-horned into them when a simpler or more direct approach would produce more sensible code. The Design Patterns book can be kept around in case it might be useful, but it should not be memorized. It's a toolbox — and not the only one in the workshop, either. It's definitely not a plan.

- Not everything benefits from OOP. Object-oriented programming is good for Web apps. I personally can't think of a large project I'd develop in PHP or C# that would not make heavy use of objects. Object-oriented code is extremely good for word processors and spreadsheets. It's usually good for games. However, a lot of code is still written in C, or in C++ but with limited OOP. This would not be the case if C++ was universally a "better C." In many applications, and particularly with kernels and device drivers, it's not desirable to have any extra layers (abstractions, virtual functions) or portability issues (STL, Boost) that further separate the programming language from the assembly language that it will eventually output. (C is often called "machine-independent assembly language," and that's just how many C programmers like it.) The Linux kernel and Git are both written in C because, right or wrong, Linus Torvalds really hates C++. Shell scripts written in PHP and Perl often use no objects at all because it would be overkill. There can certainly be a place for C++ in embedded systems programming, which I'll talk about below, but some toolchains only present a limited subset.

- SOLID is usually gold for desktop, mobile and server applications. (Note that I'm specifically saying applications, and not operating systems.) First articulated by "Uncle Bob," these five design principles make it take more time to write code, and less time to maintain it. When adhered to, they remove any fear that extending an existing codebase will break things in unpredictable ways. Code becomes more expressive, less redundant, and more loosely coupled. SOLID is worth memorizing. There will be times when some or all SOLID principles will not help a project, particularly in low level/embedded programming. For anything like a Web or desktop app, SOLID is usually the way to go.

- Embedded development requires different strategies from desktop, mobile, and server apps. This is especially true of memory management. More on that subject here.

Putting Out Fires

After I've been working somewhere for a while, I usually earn a reputation as someone who can be counted on to fix things that need to be back online ASAP. Let me tell you the story of how I rescued a very important site.

At a company I used to work for, someone else was given responsibility for a very high-traffic website, one used by customers who liked everything to be just so. The company was compelled to change the site's software for what I think are valid business reasons. It was legacy software, we didn't use it on any other site, and we didn't want to spend resources on keeping it updated when we already specialized in the software we wanted to replace it with. Nevertheless, it was a sensitive issue.

The software change was given to someone with no technical background, and it didn't go smoothly. It had been launched on a Sunday night, which I would never have done. (Monday through Wednesday? Yes. Thursday? Maybe. Friday through Sunday? Nope! Too hard to get a hold of people if anything goes wrong.) By the time I arrived on Monday, the site had been down for about twelve hours, and the users were beyond furious. They were not at all shy about communicating their true feelings to us. Some of them emailed the CEO! The site's Alexa ranking could be seen to dip on that day, and to stay there for months afterwards.

The people who had done this were huddled around one of their computers, unsure of what to do. I immediately suspected what was wrong, and I asked if I could fix it for them. They said yes. I flagged down a trusted DBA and, to the surprise of neither of us, the database server was thrashing badly. I took the site down, replacing it with a temporary page apologizing for the outage, and we set to work. I had the DBA modify the InnoDB table settings, optimizing the amount of RAM set aside for caching and so on, according to a best-practices white paper published by NetApp. I also had him convert several tables from MyISAM to InnoDB, and add a few indexes as well. I knew from experience that this would ease the load on the DB server.

It took an hour or so to convert the tables from one storage engine to another, and to rebuild the indexes. When this was done, I brought the site back online, and it loaded normally. The users recognized this immediately, and began to log in en masse — thousands of them every minute. The site took the traffic without a hitch. Pages were loading nice and fast. The users hated the new software, but there was nothing I could do about that. My responsibility was to make it work, and that's just what I did.

Dev Lab

I develop websites with a headless Ubuntu server, and either an Ubuntu workstation or a Windows 10 laptop. To make everything work smoothly, there are a few things I have to do for each one.

- Internal DNS. I add an A record to a local zone I have set up in bind9. Any machine I develop is set up to use my server for DNS. If I type http://sitename on one of these machines, I'll see whatever site I'm working on.

- Samba. I often use Sublime Text on my Windows laptop. To facilitate this, I add a share that points directly to the site user's home directory. All files are forced to be written as that user, regardless of what my Windows login name is.

- Apache. I copy one of the files in /etc/apache2/sites-available and edit a few lines. I use mpm-itk to run each Apache instance under the uid/gid of the same user that owns all the PHP files. That way, I don't have to set up weird directory permissions.

- Laravel. Assuming the site is made in Laravel, I have a script that I use to generate a skeleton version of the site. This includes routes, a page controller, removal of welcome.blade.php, an index page, layout, header with nav bar, and empty footer. All the CSS/JS stuff is taken care of.

- Git. I have a separate git server running on EC2. Each website has its own read-only user.

- Chef. I use Chef to bootstrap production servers, and to deploy the source from the git server. For Laravel-based sites, one of the recipes creates a symlink that activates the production version of .env.